Free AI Checker Guide 2025: Which Detector to Trust, and When Not to Trust the Score

A source-backed guide to free AI checker tools. Compare Scribbr, GPTZero, Grammarly, Ahrefs, Copyleaks, and Turnitin, then use the AI Checker Confidence Ladder to decide what a score actually means.

If you pasted an essay, article, or client draft into a free AI checker and got a scary number back, the real problem is not just "which detector is best." The harder question is whether that number should change what you do next. A self-check before submission is one thing. A classroom accusation, editorial rejection, or client dispute is something else entirely.

This guide is built for that real decision. Instead of inventing another "we tested 15 detectors and found one winner" story, it uses current official product pages, current platform guidance, and current research to answer two practical questions: which free AI checker is worth starting with, and when should human evidence outrank the score.

TL;DR

- If you want a truly free first-pass checker for essays, start with Scribbr for convenience or GPTZero for process evidence. Scribbr currently allows unlimited free checks of up to 1,200 words each with no sign-up (Scribbr, 2026-03-18). GPTZero currently gives students up to 10,000 free words per month and adds Writing Replay / Origin, which is often more useful than a second score (GPTZero, 2026-03-18).

- If you want a quick scan for marketing or blog copy, Ahrefs and Grammarly are the fastest low-friction starting points. Ahrefs currently limits the free detector to 2,048 characters (Ahrefs, 2026-03-18). Grammarly currently markets a 99% accuracy claim and first place on the RAID benchmark, but that should be treated as a vendor claim, not courtroom-grade certainty (Grammarly, 2026-03-18).

- If the outcome could affect grades, contracts, or publication decisions, a free checker is only a weak signal. Turnitin says its AI report should not be the sole basis for adverse actions and does not surface scores below 20% because false positives are more likely there (Turnitin Guides, 2026-03-18).

- Research remains cautionary. OpenAI removed its own AI text classifier in July 2023 because of low accuracy (OpenAI, 2026-03-18), and a NAACL Findings 2025 paper reports that detectors can perform poorly in some settings and can be evaded with moderate attacks (Tufts et al., 2026-03-18).

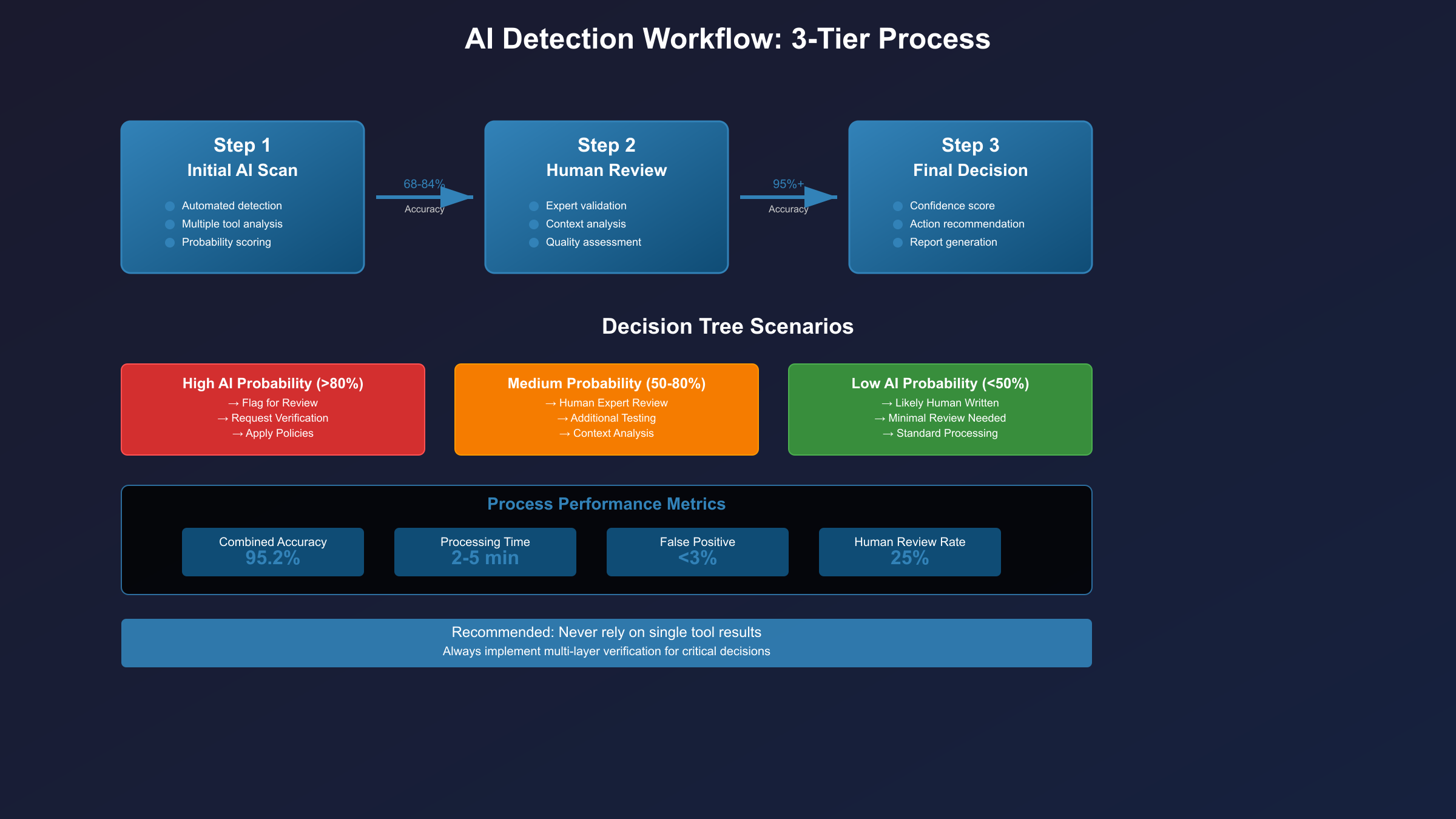

- The right workflow is: use a free checker for triage, add a second detector for medium-risk review, and require process evidence plus human judgment for high-risk decisions.

Which free AI checker should you trust first?

The short answer is that you should trust different tools for different jobs. There is no single free AI checker that deserves to be treated as a final referee across essays, articles, multilingual content, and institutional misconduct reviews. The best starting point depends on whether you are self-checking, reviewing someone else’s work, or making a decision with consequences.

If you are a student doing a low-risk self-check on an essay or reflection, Scribbr is the easiest starting point because it is genuinely free for repeated short checks and does not ask for a sign-up at the door (Scribbr, 2026-03-18). GPTZero is the better first stop when you think you may need to show how the document was written, because Writing Replay and Origin give you process evidence instead of just one more probability score (GPTZero, 2026-03-18).

If you are scanning short-form website copy or marketing text, Ahrefs and Grammarly are more useful than heavier academic workflows. Ahrefs is fast and simple, but its short input cap means it is better for snippets than long documents (Ahrefs, 2026-03-18). Grammarly is easy to reach and prominent in search results, but its strongest claims come from its own marketing page, so it is better treated as a convenient screen than as an independent final judge (Grammarly, 2026-03-18).

If you are handling multilingual editorial review or team workflows, free checkers stop being enough very quickly. That is where tools such as Copyleaks or an institutional system such as Turnitin start to make more sense. The key distinction is not "free versus paid" in the abstract. It is whether you only need a weak signal, or whether you need a defensible review trail.

| Scenario | Best place to start | Why |
|---|---|---|
| Student self-checking a short paper | Scribbr or GPTZero | Low friction, good for first-pass review |
| Teacher or editor reviewing a suspicious draft | GPTZero plus a second detector | Better to combine a score with process evidence |
| Marketer checking short web copy | Ahrefs or Grammarly | Fast scan for low-stakes triage |
| Content team reviewing multilingual output | Copyleaks | Better fit for workflow and language coverage |
| School using LMS-based review | Turnitin inside policy workflow | Useful only when paired with process and human review |

The AI Checker Confidence Ladder: what to do with the score

The biggest mistake people make is assuming that every detector score means the same thing. It does not. A 78% score on a self-check tool for a marketing paragraph should not trigger the same reaction as a Turnitin report inside a formal school process. The score only becomes useful when you pair it with the risk level of the decision.

| Risk level | Typical context | What the score means | What you should do next |
|---|---|---|---|
| Low-risk self-check | Student preview, draft cleanup, internal self-review | Weak signal only | Use the result to decide whether to edit or review again; do not treat it as proof |
| Medium-risk review | Editor screening a submission, manager checking a deliverable | Possible risk indicator | Add a second detector and review structure, sources, and revision history |
| High-risk decision | Academic misconduct review, client dispute, formal rejection | Insufficient on its own | Require process evidence plus human review, policy context, and document history |

This ladder is the core rule that most "best AI detector" pages still miss. Free AI checkers are useful as triage. They are weak at adjudication. That distinction is consistent with current product and platform guidance, not just with academic criticism. Turnitin says its own AI writing report should not be used as the sole basis for adverse actions, and it no longer surfaces scores from 1% to 19% because that range is more vulnerable to false positives (Turnitin Guides, 2026-03-18).

Once you adopt that ladder, detector shopping gets much easier. You stop asking "Which tool is magically accurate?" and start asking "Which tool gives me the right level of signal for this decision?"
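For readers who think in code, the ladder can be sketched as a small lookup. This is only an illustration: the names `Risk`, `LadderStep`, and `next_step` are hypothetical, and the row text is copied from the table above. The point it tries to show is that the risk level, not the percentage, selects the next action.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low-risk self-check"
    MEDIUM = "medium-risk review"
    HIGH = "high-risk decision"


@dataclass(frozen=True)
class LadderStep:
    score_meaning: str
    next_action: str


# Rows mirror the Confidence Ladder table; the data structure is illustrative.
LADDER = {
    Risk.LOW: LadderStep(
        "Weak signal only",
        "Edit or review again; do not treat the result as proof",
    ),
    Risk.MEDIUM: LadderStep(
        "Possible risk indicator",
        "Add a second detector and review structure, sources, and revision history",
    ),
    Risk.HIGH: LadderStep(
        "Insufficient on its own",
        "Require process evidence plus human review, policy context, and document history",
    ),
}


def next_step(risk: Risk, score_percent: float) -> str:
    """Return the ladder's guidance for a detector score at a given risk level.

    The score is validated but deliberately does not change the outcome:
    in this sketch only the risk level selects the step.
    """
    if not 0 <= score_percent <= 100:
        raise ValueError("score_percent must be between 0 and 100")
    step = LADDER[risk]
    return f"{step.score_meaning}: {step.next_action}"
```

Calling `next_step(Risk.LOW, 78)` and `next_step(Risk.HIGH, 78)` returns two different recommendations for the same 78% score, which is the behavior the ladder is meant to enforce.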

The best free AI checkers for students, editors, and content teams

Scribbr is the easiest recommendation for readers who want a real free checker instead of a gated trial. Its current positioning is simple: unlimited free checks, no sign-up required, and up to 1,200 words per check (Scribbr, 2026-03-18). That makes it a strong fit for students who want to paste a paragraph, abstract, or short essay section and see whether the wording looks overly synthetic.

GPTZero is the better recommendation when you think you may need to defend your authorship, not just inspect the final text. Its current student-facing offer says the free plan includes 10,000 words per month, and its Writing Replay / Origin tooling is designed to show how the text was produced over time (GPTZero, 2026-03-18). That matters because a writing history can be stronger evidence than another detector score.

Grammarly is convenient because it sits inside a writing assistant users already know. Its AI detector page currently markets a 99% accuracy claim and says it ranks first on the RAID benchmark (Grammarly, 2026-03-18). That makes it a persuasive search result, but readers should still interpret the claim carefully. Benchmarks and marketing copy can tell you that a tool is serious; they do not automatically mean the tool should decide a grade dispute or publication decision on its own.

Ahrefs is the cleanest choice for quick, low-stakes web-copy checks. It is useful when you want a fast answer on a product description, landing-page paragraph, or brief article intro. The tradeoff is that its current detector is capped at 2,048 characters (Ahrefs, 2026-03-18), so it is best understood as a snippet checker rather than a full workflow solution.

Copyleaks belongs in this conversation because many readers searching for a "free AI checker" eventually discover that the real decision is whether they need a workflow tool. Copyleaks is less of a pure free-tool answer and more of an upgrade path for multilingual or team use, but its public detector still matters because it currently allows scans up to 25,000 characters without logging in (Copyleaks, 2026-03-18). Its current product and documentation pages also emphasize AI detection across 30+ languages, including Simplified and Traditional Chinese (Copyleaks, 2026-03-18). That is why it shows up later in this guide than Scribbr or GPTZero.

Turnitin is not a casual self-serve free checker, but it still matters because many students and teachers encounter AI detection through Turnitin rather than through standalone tools. Its value comes from being embedded in policy, LMS workflows, and institutional review. Its limit is equally important: Turnitin explicitly says the report is not enough on its own for adverse action (Turnitin Guides, 2026-03-18). It also only generates an AI writing report when a file contains at least 300 words of prose, does not exceed 30,000 words, and is written in a supported language such as English, Spanish, or Japanese (Turnitin Guides, 2026-03-18).
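The per-check limits quoted above can be turned into a quick pre-flight check before you paste a document anywhere. The sketch below is a rough aid, not a vendor API: `Limit`, `LIMITS`, and `tools_that_fit` are hypothetical names, the figures are the ones cited in this guide as of 2026-03-18, and whitespace-based word counting is an assumption that may differ from how each vendor counts. GPTZero is left out because its 10,000-word figure is a monthly quota rather than a per-check cap, and Turnitin's prose and language conditions are not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    unit: str          # "words" or "chars"
    maximum: int
    minimum: int = 0


# Limits as quoted in this guide; vendors may change them at any time.
LIMITS = {
    "Scribbr": Limit("words", 1_200),
    "Ahrefs": Limit("chars", 2_048),
    "Copyleaks": Limit("chars", 25_000),
    "Turnitin": Limit("words", 30_000, minimum=300),
}


def tools_that_fit(text: str) -> list[str]:
    """List the tools whose quoted size limits accept this text."""
    words = len(text.split())   # approximate word count
    chars = len(text)
    fits = []
    for name, limit in LIMITS.items():
        size = words if limit.unit == "words" else chars
        if limit.minimum <= size <= limit.maximum:
            fits.append(name)
    return fits
```

A 500-word draft of about 2,500 characters, for example, fits Scribbr, Copyleaks, and Turnitin under these figures but exceeds the Ahrefs character cap.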

What the latest research and official docs actually say about detector accuracy

The most important thing to understand is that the reliability debate did not end when commercial detector pages became more polished. It is still live. OpenAI itself removed its own AI classifier in July 2023 because of a low rate of accuracy (OpenAI, 2026-03-18), and the original OpenAI note is still worth reading because it states the limitation directly. That does not prove all modern detectors are equally weak, but it is a strong reminder that AI-text provenance remains an unsolved problem.

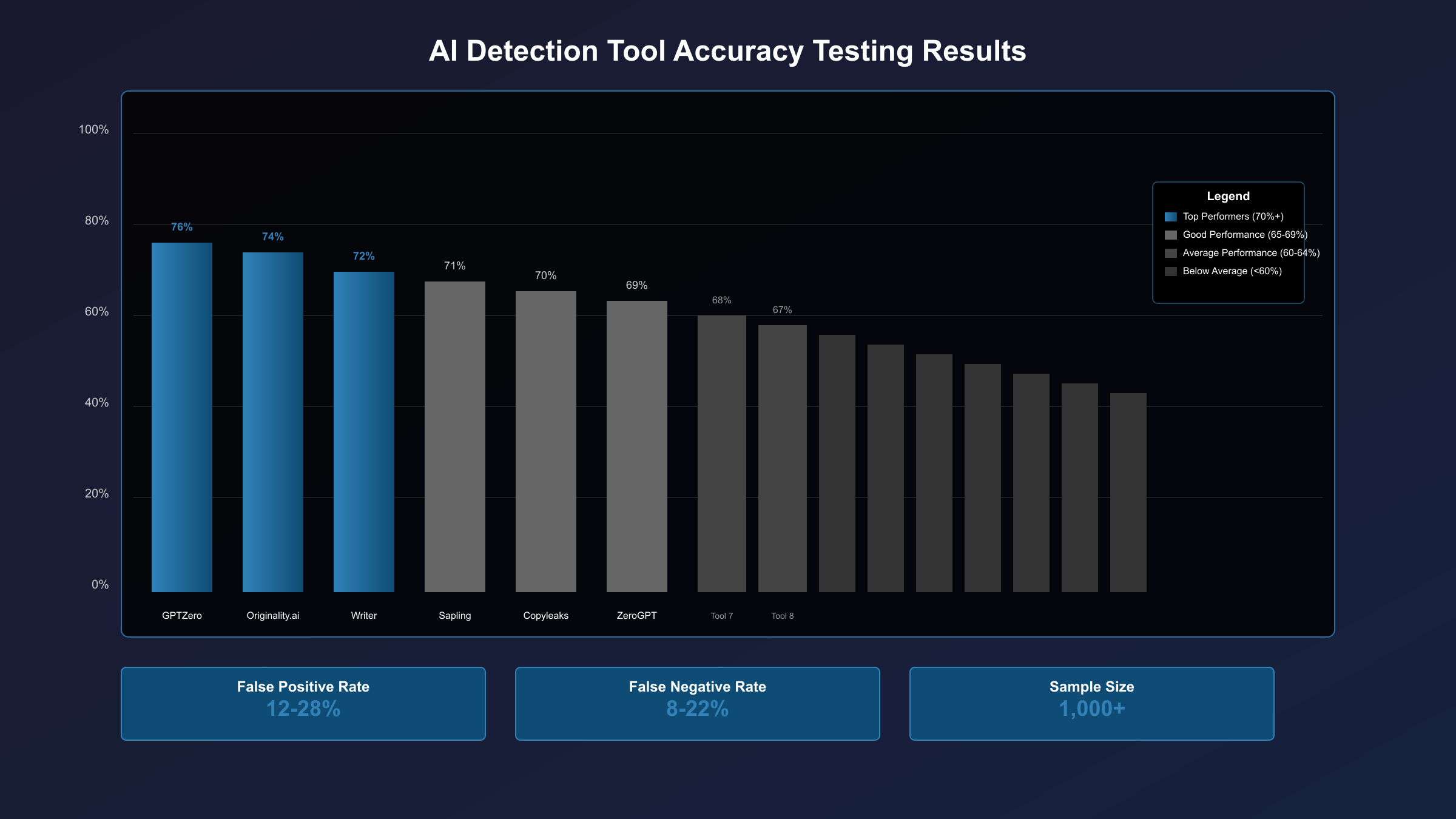

The research picture is still cautious. A 2025 NAACL Findings paper reports that both trained and zero-shot detectors can struggle to maintain useful sensitivity at low false-positive rates, and that moderate attacks can materially reduce performance (Tufts et al., 2026-03-18). In practical terms, that means a detector may look convincing on a marketing page while still failing in unfamiliar domains, on new model outputs, or after ordinary editing passes.
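A short calculation helps explain why the false-positive rate matters so much. The sketch below applies Bayes' rule to estimate what share of flagged documents are actually AI-written. Every input is a hypothetical number chosen for illustration, not a figure from the paper or from any vendor, and the function name is likewise made up.

```python
def share_of_flags_that_are_ai(
    prevalence: float,
    sensitivity: float,
    false_positive_rate: float,
) -> float:
    """Bayes' rule: P(document is AI-written | detector flagged it).

    prevalence          -- assumed share of submissions that are AI-written
    sensitivity         -- assumed share of AI-written texts the detector flags
    false_positive_rate -- assumed share of human-written texts it flags anyway
    """
    for value in (prevalence, sensitivity, false_positive_rate):
        if not 0 <= value <= 1:
            raise ValueError("all inputs must be probabilities between 0 and 1")
    true_flags = prevalence * sensitivity
    false_flags = (1 - prevalence) * false_positive_rate
    if true_flags + false_flags == 0:
        raise ValueError("the detector never flags anything under these inputs")
    return true_flags / (true_flags + false_flags)


# Hypothetical scenario: 10% of submissions are AI-written, 60% of those get flagged.
strict = share_of_flags_that_are_ai(0.10, 0.60, 0.01)   # 1% false-positive rate
loose = share_of_flags_that_are_ai(0.10, 0.60, 0.05)    # 5% false-positive rate
```

Under these assumed inputs, `strict` comes out at about 0.87 and `loose` at about 0.57. In other words, moving from a 1% to a 5% false-positive rate would mean that roughly four in ten flagged documents were written by a human, which is why a flag alone is a weak basis for a high-stakes decision.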

Bias remains part of the story as well. The widely cited 2023 Patterns paper on public GPT detectors found strong bias against non-native English writing, with more than half of a TOEFL essay set misclassified on average by the detectors studied (Patterns, 2026-03-18). That finding does not mean every current commercial tool behaves the same way today, but it is strong enough to justify caution whenever a score is used against multilingual writers or formal academic prose.

Official documentation tends to sound more careful than product marketing, and that difference is instructive. Turnitin’s current guidance and release notes tell users the report can be wrong and should not be used alone (Turnitin Guides, 2026-03-18). Google’s search guidance says the real ranking question is whether content is created to help people, not whether it merely passes an AI detector (Google Search Central, 2026-03-18). Together, those signals support a more mature rule: detector scores are about risk screening, not truth certification.

| Evidence type | What it tells you | What it cannot tell you |
|---|---|---|
| Vendor benchmark claim | A company wants to show strong performance | Whether the tool is safe for your exact use case |
| Tool interface result | A draft may deserve closer review | Whether the author cheated or the content should be rejected |
| Research paper | Where detector reliability tends to fail | Which commercial product will be best for your document tomorrow |
| Institutional policy doc | How a score should be used in process | Whether the score alone is correct |

Why a high AI score is not enough for schools, editors, or clients

A high score feels decisive because it compresses uncertainty into a single number. That is exactly why people over-trust it. The number is emotionally efficient. It looks like a result. But when the consequences include grade penalties, rejected work, or accusations of dishonesty, emotionally efficient is not the same thing as operationally defensible.

For schools, the better question is not "Did the detector say 72%?" but "What evidence do we have besides the detector?" A defensible review usually includes draft history, notes, sources, citation behavior, revision timestamps, and direct discussion with the writer. Turnitin’s own guidance supports that principle by refusing to treat the AI report as a sole basis for action (Turnitin Guides, 2026-03-18).

For editors and clients, the same logic holds. A detector can help explain why a draft feels thin, repetitive, or suspiciously uniform, but it should not replace editorial judgment. If the document has solid sourcing, a visible revision trail, and domain-specific reasoning that the author can explain, that evidence usually matters more than a single detector output.

This is also where GPTZero’s process-oriented tooling becomes valuable. A replay or document history does not prove that every sentence was human-authored, but it is often more informative than an isolated risk score. In other words, the strongest evidence is usually not "one more detector." It is a better view of how the text came into existence.

In practice, the most defensible evidence chain is boring rather than dramatic. It is a dated outline, a list of source URLs, visible revision steps, deleted sections, and the writer’s ability to explain why claims were made and why sources were chosen. That kind of record is hard to fake consistently and easy to review calmly. A detector percentage, by contrast, is easy to overreact to because it looks precise even when the underlying judgment is probabilistic.

If you want a broader workflow discussion, our deeper guide to AI content detector strategies goes further into team and classroom policy design. The key point here is simpler: a detector score can trigger review, but it should rarely close the case.

When to upgrade from a free checker to a workflow tool

The right time to move beyond a free AI checker is when you stop asking for convenience and start asking for process. If you need longer document support, multilingual handling, batch review, saved reports, or auditable reviewer workflows, you are no longer shopping for a lightweight checker. You are shopping for a review system.

That is the point where Copyleaks or Turnitin become more relevant than Scribbr or Ahrefs. Copyleaks is the stronger upgrade when you need multilingual review and shareable workflow infrastructure, while Turnitin matters more when a school already has policy and LMS integration in place (Copyleaks, 2026-03-18; Turnitin Guides, 2026-03-18). Free tools are optimized for speed and accessibility. Workflow tools are optimized for scale, documentation, permissions, and institutional use. Those are different jobs, and mixing them up leads to bad expectations.

A useful rule of thumb is this: if the decision will cost someone money, reputation, a passing grade, or publication approval, a free checker should not be your last step. It can still be your first step. But the moment you need a record of why you escalated, who reviewed what, or how multilingual content was assessed, you should treat the free checker as a preliminary signal and move into a stronger process.

Writers who rely heavily on AI drafting should also consider improving the writing record instead of obsessing over the detector number. Version history, source notes, and editorial revisions make the work more defensible and often improve the final quality more than another pass through a checker ever will.

For teams, the transition point is usually obvious once you write the review rubric down. If reviewers need to capture the detector used, the document length, the language, the passages that triggered concern, the second-opinion result, and the final human judgment, you have already moved beyond a casual checker use case. At that point, the organization benefits more from a consistent workflow than from endlessly testing consumer-facing free detectors against each other.
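The rubric fields listed above map naturally onto a small record type. The following is a minimal sketch, assuming a team wants one record per reviewed document. The class name, field names, and the `is_complete` rule are hypothetical choices, not a format that any of the tools discussed here exports.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ReviewRecord:
    """One reviewer's notes on one document (illustrative structure)."""

    detector: str                       # which detector produced the first result
    document_words: int                 # document length
    language: str                       # language of the document
    flagged_passages: list[str] = field(default_factory=list)
    second_opinion: Optional[str] = None   # result from a second detector
    human_judgment: Optional[str] = None   # the reviewer's final call

    def is_complete(self) -> bool:
        """True only when a second opinion and a human judgment are both recorded."""
        return self.second_opinion is not None and self.human_judgment is not None


record = ReviewRecord(detector="GPTZero", document_words=1800, language="en")
record.flagged_passages.append("Paragraph 3: uniform sentence rhythm")
record.second_opinion = "Second detector: low likelihood"
record.human_judgment = "Revision history reviewed with author; no action"
```

Making `is_complete` depend on both the second opinion and the human judgment is one way to encode the idea that a single detector result should not close a review.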

Our recommendation by scenario

The goal is not to crown a universal winner. It is to reduce the chance that you use the wrong detector in the wrong context.

| Scenario | Start with | Upgrade when | Evidence to gather |
|---|---|---|---|
| Students | Scribbr for convenience or GPTZero for process-aware review | The document is long, high-stakes, or flagged by a second reader | Draft history, notes, citations, Writing Replay, revision trail |
| Editors | GPTZero or Grammarly for first-pass review | A piece may be rejected, sent back, or challenged | Author explanation, source record, revision log, second detector |
| Content teams | Ahrefs for quick snippet checks | You need multilingual review, reports, or batch workflows | Review rubric, workflow logs, Copyleaks or Turnitin process |

If you want the most generous truly free entry point, choose Scribbr. If you want the best free starting point for authorship defense, choose GPTZero. If you want the fastest low-stakes scan for short web copy, choose Ahrefs or Grammarly. If you need a decision that someone might later challenge, stop looking for a magic free checker and build an evidence chain instead.

That conclusion also aligns with SEO reality. Google rewards helpful, people-first content, not content that merely clears an AI detector threshold (Google Search Central, 2026-03-18). So even for publishers and marketers, the better long-term strategy is stronger sourcing, editing, and original judgment, not detector gaming.

If your use case is specifically around AI-assisted academic drafting rather than detection alone, our AI paper writing tools guide is the more relevant follow-up read. If you are earlier in the tool-selection stage, our AI writing tools guide is a better companion piece. Detection is only one side of the workflow; evidence quality and revision quality matter just as much.

FAQ: free AI checker accuracy, false positives, and school use

What is the most reliable free AI checker right now?

For a purely free first-pass checker, Scribbr is the easiest recommendation because it is frictionless and genuinely free for repeated short checks (Scribbr, 2026-03-18). For authorship defense, GPTZero is often the more useful choice because Writing Replay adds process evidence (GPTZero, 2026-03-18). The more precise answer is that reliability depends on the decision you are making, not just on the detector brand.

Can a free AI checker prove that a paper was written by AI?

No. A free AI checker can raise or lower suspicion, but it cannot prove authorship on its own. Even Turnitin says its AI report should not be the sole basis for adverse action (Turnitin Guides, 2026-03-18). In high-stakes cases, process evidence and human review matter more than the raw percentage.

Why do AI detectors still get false positives?

Because detector outputs depend on statistical patterns, not on direct access to author intent. That means formal writing, multilingual writing, edited AI-assisted writing, and carefully revised drafts can all distort results. Research and vendor guidance both show that reliability remains conditional rather than absolute (Tufts et al., 2026-03-18; Turnitin Guides, 2026-03-18).

Is GPTZero better than Scribbr?

It depends on what you need. Scribbr is better when you want a free, no-sign-up, low-friction first check on a short document (Scribbr, 2026-03-18). GPTZero is better when you need process evidence or plan to defend how the text was produced (GPTZero, 2026-03-18). If you only compare detector percentages, you miss the real difference.

Should schools rely on Turnitin AI detection?

Schools can use Turnitin as part of a policy workflow, but not as a shortcut to punishment. Turnitin itself says the report is not sufficient alone and hides sub-20% results to reduce false-positive misuse (Turnitin Guides, 2026-03-18). A school that treats the number as the whole case is using the product more aggressively than the vendor’s own guidance supports.

What should I do if Turnitin shows an asterisk or a score below 20%?

Treat it as a caution signal, not a verdict. Turnitin now withholds exact percentages below 20% because that range has a higher false-positive incidence (Turnitin Guides, 2026-03-18). In practice, that means a low or starred result should push you toward contextual review, not toward stronger confidence.
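The display rule described in this guide can be written down in a few lines. This is a sketch of the rule as stated here (scores from 1% to 19% are not surfaced), not Turnitin's code: the function name is hypothetical, and the exact label shown in the real report is an assumption.

```python
def displayed_score(score_percent: int) -> str:
    """Mimic the display rule described in this guide.

    Scores from 1 to 19 are replaced by an asterisk; 0 and scores of
    20 or more are shown as-is. The "*%" label is an assumed rendering.
    """
    if not 0 <= score_percent <= 100:
        raise ValueError("score_percent must be between 0 and 100")
    if 1 <= score_percent <= 19:
        return "*%"
    return f"{score_percent}%"
```

Under this rule a reviewer cannot distinguish a 2% result from a 19% result, which is another reason to treat a starred report as a prompt for contextual review rather than as a small but meaningful number.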

Can AI humanizers or editing passes beat free AI checkers?

Sometimes, yes. The current research literature still shows that detector performance can degrade under moderate attacks or ordinary editing changes (Tufts et al., 2026-03-18). That is another reason to avoid building policies around the assumption that a detector score is a durable ground truth.

Does passing an AI detector help SEO?

Not directly. Google’s guidance focuses on whether content is helpful, original in value, and created primarily for people rather than for search manipulation (Google Search Central, 2026-03-18). A detector score may be useful internally, but it is not a ranking goal by itself.