Codex vs Claude Code in 2026: Which AI Coding Agent Should You Adopt First?

A current, source-backed comparison of OpenAI Codex and Claude Code focused on workflow fit, pricing, governance, and a practical adoption decision worksheet.

Comparing Codex and Claude Code used to be a simple feature-table exercise. It is not simple anymore. Both products now sit on fast-moving model families, both vendors are shipping new agent workflows rather than just better autocomplete, and a lot of articles still recycle stale 2025 claims that no longer help a team decide what to adopt.

The useful question in 2026 is not “which one is smarter?” The useful question is “which operating model fits how my team actually works?” Codex increasingly behaves like a delegated cloud agent with broad ChatGPT integration, while Claude Code behaves like a terminal-native coding partner that keeps the human much closer to each action. Those are different workflow contracts, and they create different failure modes.

This guide is written for that decision. It updates the old benchmark and pricing assumptions, fixes the common “local versus cloud” oversimplification, and ends with a worksheet you can use to choose Codex, Claude Code, or a staged hybrid rollout. If Cursor is also on your shortlist, see our three-way comparison.

TL;DR

If your team wants backgroundable cloud execution, easy access through paid ChatGPT plans, and lower API pricing when you do go metered, Codex is usually the better first rollout (OpenAI; OpenAI API docs, verified 2026-03-18).

If your team wants a terminal-native agent, explicit command visibility, and tighter supervision during complex repository work, Claude Code is usually the better first rollout (Anthropic Claude Code docs, verified 2026-03-18).

Do not default to hybrid on day one. Use the decision worksheet below first. Hybrid is strongest when you already have one disciplined workflow and a clear reason to add a second.

The Real Difference Is the Working Contract

The most important update since the original version of this article is that “Codex” and “Claude Code” are products, not static models. OpenAI now positions Codex across the CLI, IDE extension, web, and the Codex app, and says it is included with ChatGPT Plus, Pro, Business, Enterprise, and Edu, with limited-time access on Free and Go and extra credits available when needed (OpenAI Help Center, verified 2026-03-18). Anthropic positions Claude Code as a terminal tool that can edit files, run commands, create commits, and connect to external systems through MCP (Anthropic Claude Code docs, verified 2026-03-18).

That product framing matters because most teams do not buy a model in isolation. They buy a loop. Codex is strongest when you are comfortable delegating a task, reviewing the result, and letting a cloud-hosted agent do more of the intermediate work. Claude Code is strongest when you want the agent inside the repo and terminal workflow, where exploration, command execution, and revision happen in a tighter human-supervised loop.

This is why older comparison tables keep breaking. A table that says “Codex is cloud” and “Claude Code is local” is directionally true but operationally incomplete. The question is not where the UI lives. The question is how much control, isolation, review, and asynchronous delegation your team wants at the moment work is being done.

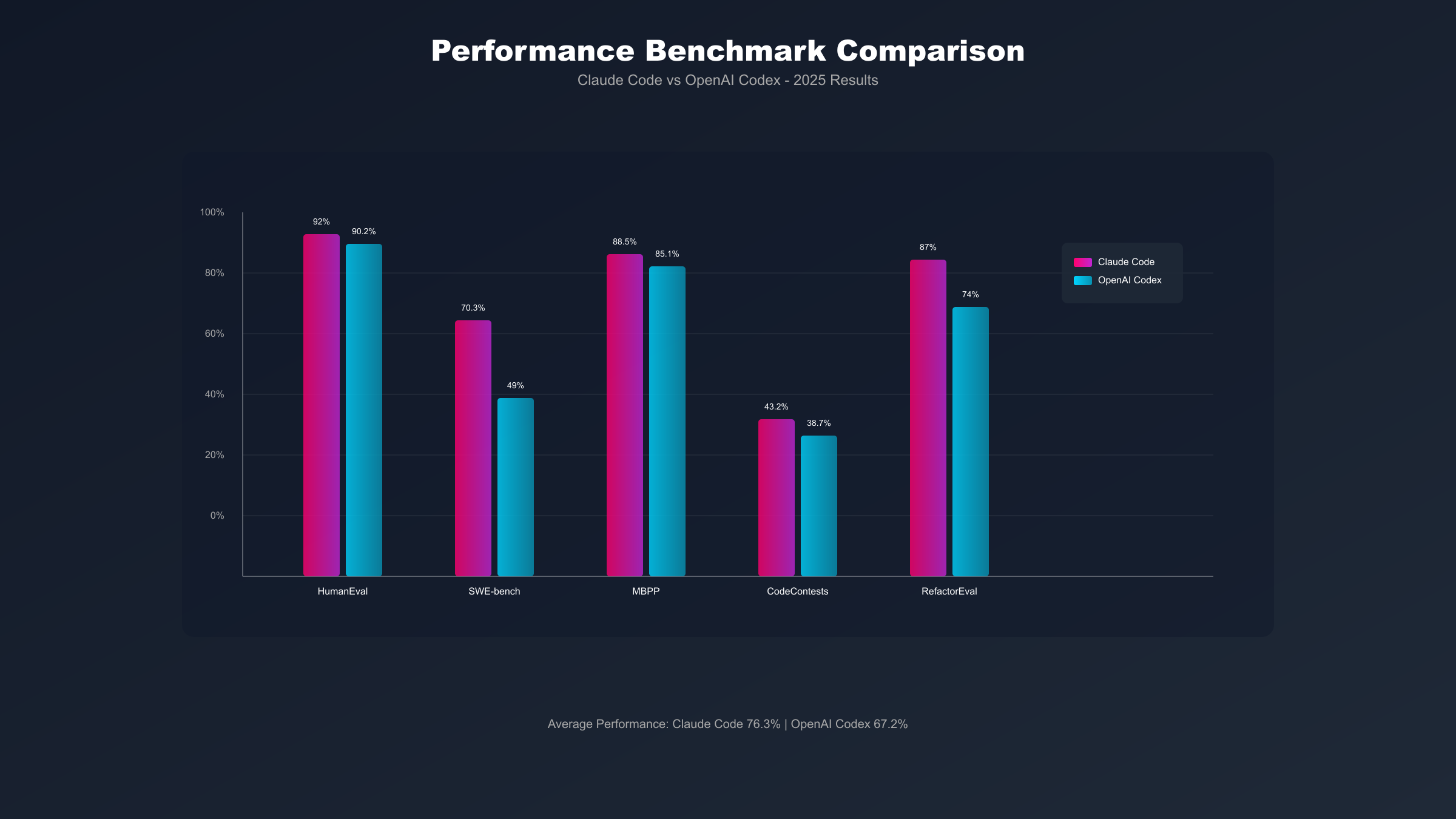

Benchmarks Matter Less Than Most Buyers Think

Benchmarks still matter, but not in the way many comparison posts imply. OpenAI says GPT-5.2-Codex sets a new industry high on SWE-Bench Pro and Terminal-Bench 2.0 (OpenAI, verified 2026-03-18). Anthropic’s latest public system-card tables put Claude 4.6-family models in roughly the same serious range, with reported scores spanning 79.6%-80.8% on SWE-bench Verified and 59.1%-65.4% on Terminal-Bench 2.0 depending on the Claude model and Anthropic’s stated setup (Anthropic system cards, verified 2026-03-18). Those are useful signals, but they are not a clean scoreboard because they do not always use the same model, harness, prompt strategy, or benchmark variant.

That last detail is where many articles go wrong. SWE-bench Verified, SWE-Bench Pro, and terminal-oriented evaluations test overlapping but not identical abilities. If one vendor publishes a stronger Verified result and the other emphasizes Pro or terminal execution, you should not flatten those into a fake single-number winner. What you can say with confidence is that both products now sit in the “serious engineering tool” category, and the choice is more about workflow fit than about one vendor being obviously behind.

OpenAI’s API docs currently list gpt-5.2-codex with a 400,000-token context window and a 128,000-token max output (OpenAI API docs, verified 2026-03-18). Anthropic’s model docs say Claude Sonnet 4 can reach a 1M-token context window with the context-1m-2025-08-07 beta header, and that premium long-context pricing applies once input exceeds 200K tokens (Anthropic model docs, verified 2026-03-18). In practice, this means “Claude always has more context” is too simplistic. Context policy, compaction, tool use, and task decomposition now matter almost as much as the raw ceiling.
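
To make the context numbers above concrete, here is a minimal sketch that checks an input size against the limits quoted in this article. The function name and the wording of the results are hypothetical, the token count is assumed to come from whatever tokenizer you already use, and the check deliberately ignores the output budget, compaction, and tool-call overhead.

```python
# Limits as quoted in this article (verified 2026-03-18); confirm against vendor docs.
CODEX_CONTEXT_TOKENS = 400_000             # gpt-5.2-codex context window
CLAUDE_BETA_CONTEXT_TOKENS = 1_000_000     # Claude Sonnet 4 with the 1M beta header
CLAUDE_PREMIUM_THRESHOLD_TOKENS = 200_000  # premium long-context pricing above this


def context_report(input_tokens: int) -> dict[str, str]:
    """Hypothetical helper: classify an input size for each product."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")

    if input_tokens <= CODEX_CONTEXT_TOKENS:
        codex = "fits"
    else:
        codex = "exceeds window; split the task"

    if input_tokens > CLAUDE_BETA_CONTEXT_TOKENS:
        claude = "exceeds window; split the task"
    elif input_tokens > CLAUDE_PREMIUM_THRESHOLD_TOKENS:
        claude = "fits with beta header; premium pricing applies"
    else:
        claude = "fits; standard pricing"

    return {"codex": codex, "claude_code": claude}


if __name__ == "__main__":
    for size in (150_000, 350_000, 600_000):
        print(size, context_report(size))
```

A 350,000-token input is the interesting case: it fits both products, but on the Claude side it already sits in the premium pricing band, which is why the raw ceiling alone is a poor basis for comparison.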

If you only care about who wins the leaderboard, you will probably choose badly. A team that thrives with explicit terminal supervision can still get more value from Claude Code even if OpenAI posts a stronger result on one benchmark. A team that wants unattended agent loops and light local setup can still get more value from Codex even if Anthropic posts a stronger result on another. Benchmarks should set expectations, not make the purchase for you.

Cost Model: Seat Access, API Burn, and Hidden Overhead

The cost story has changed enough that many older articles are now actively misleading. On the Codex side, OpenAI has moved the default buyer story toward paid ChatGPT access rather than a standalone “Codex subscription” mental model. OpenAI says Codex usage is included for ChatGPT Plus, Pro, Business, Enterprise, and Edu users, and that additional credits can be purchased when needed (OpenAI, verified 2026-03-18). For API-driven teams, OpenAI currently lists gpt-5.2-codex at $1.75 per 1M input tokens and $14 per 1M output tokens, with cheaper cached input pricing (OpenAI API docs, verified 2026-03-18).

Anthropic’s public Claude Code guidance is different. Instead of emphasizing a flat public seat price, Anthropic frames cost around usage behavior: it reports an average Claude Code cost of about $6 per developer per day, with 90% of users staying under $12 daily, and roughly $100-200 per developer per month with Sonnet 4, depending on how intensively the tool is used (Anthropic Claude Code docs, verified 2026-03-18). Anthropic’s API pricing for Claude Sonnet 4 is currently $3 per MTok input and $15 per MTok output (Anthropic pricing docs, verified 2026-03-18).
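
As a rough sanity check on how the daily and monthly figures relate, the arithmetic can be sketched as follows. The 21 working days per month is my assumption, not an Anthropic figure, and the function name is hypothetical.

```python
# Assumption: roughly 21 working days in a month. Adjust for your team.
WORKING_DAYS_PER_MONTH = 21


def monthly_from_daily(daily_usd: float, working_days: int = WORKING_DAYS_PER_MONTH) -> float:
    """Scale a per-developer daily spend to a per-developer monthly spend."""
    return daily_usd * working_days


average_month = monthly_from_daily(6.0)       # the ~$6/day average quoted above
heavy_user_month = monthly_from_daily(12.0)   # the $12/day level 90% of users stay under

print(f"average developer: ${average_month:.0f}/month")    # $126/month
print(f"at the $12/day mark: ${heavy_user_month:.0f}/month")  # $252/month
```

Under that assumption the average lands inside the quoted $100-200 range, while a developer who regularly hits the $12 daily mark would exceed it, which is the variance the seat-price tables tend to hide.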

Here is the cost comparison that actually matters:

| Cost lens | Codex | Claude Code | What it means |
|---|---|---|---|
| Default access path | Included in main paid ChatGPT plans, with limited-time access on Free and Go (OpenAI Help Center, verified 2026-03-18) | Cost is usually managed as usage spend rather than a simple flat-rate public plan story (Anthropic Claude Code docs, verified 2026-03-18) | Codex is easier to trial through an existing ChatGPT footprint |
| API input price | $1.75 / 1M input tokens (OpenAI API docs, verified 2026-03-18) | $3 / 1M input tokens for Claude Sonnet 4 (Anthropic pricing docs, verified 2026-03-18) | Codex has the lower posted input rate |
| API output price | $14 / 1M output tokens (OpenAI API docs, verified 2026-03-18) | $15 / 1M output tokens for Claude Sonnet 4 (Anthropic pricing docs, verified 2026-03-18) | Posted output pricing is close, but workflow token use still matters |
| Real-world spend guidance | Extra credits are available when bundled usage is not enough (OpenAI, verified 2026-03-18) | Average ~$100-200 per developer per month with Sonnet 4 for team usage, with wide variance (Anthropic, verified 2026-03-18) | Claude Code cost discipline depends more on usage style than many seat-price tables suggest |

The hidden cost is not just tokens. It is workflow friction. Codex is often cheaper to introduce because the seat-access story is simpler and the delegated-agent model can create visible “work completed while I was elsewhere” value early. Claude Code can be cheaper than people expect for disciplined users, but the spend curve is more sensitive to how deeply your developers stay in long-running terminal sessions. If you want a deeper Claude API breakdown, see our Claude API pricing guide.
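
For teams going metered, the posted API rates in this section translate into a per-task estimate fairly directly. The sketch below is an illustration only: the dictionary keys are labels I chose rather than official model IDs, the token counts are made-up inputs, and it ignores cached-input discounts and Anthropic's premium long-context pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    """Posted price in USD per one million tokens."""
    input_per_mtok: float
    output_per_mtok: float


# Rates as quoted in this article (verified 2026-03-18); keys are illustrative labels.
RATES = {
    "gpt-5.2-codex": Rate(input_per_mtok=1.75, output_per_mtok=14.0),
    "claude-sonnet-4": Rate(input_per_mtok=3.0, output_per_mtok=15.0),
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the uncached API cost of a single task in USD."""
    rate = RATES[model]
    total = input_tokens * rate.input_per_mtok + output_tokens * rate.output_per_mtok
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical task: 100K tokens read, 10K tokens written.
    for model in RATES:
        print(f"{model}: ${task_cost(model, 100_000, 10_000):.3f}")
    # gpt-5.2-codex: $0.315
    # claude-sonnet-4: $0.450
```

The gap per task is small in absolute terms. What moves the monthly bill is how many such tasks a developer runs and how much context each one re-reads, which is the workflow point made above.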

Security and Governance: Where the Boundary Actually Is

The original version of this article overstated the privacy difference, and that is one of the most important corrections in this update. Claude Code does run inside the local machine and terminal workflow, but Anthropic’s docs are explicit that prompts and outputs still travel over the network to interact with the model. Anthropic also says data from commercial users is not used for model training by default, with standard retention of 30 days and zero-data-retention options for appropriately configured API keys (Anthropic data usage docs, verified 2026-03-18).

Codex, on the other hand, is not just “cloud” in a vague sense. OpenAI describes Codex agents as operating with system-level sandboxing, default limits on file edits and cached web search, and explicit permission requests for commands that need elevated access such as network access (OpenAI, verified 2026-03-18). That makes Codex easier to reason about than many people assume, but it is still fundamentally a cloud-mediated execution model.

So the right governance question is not “which product is private?” The right question is “which boundary is acceptable for this code and this team?” If your policy rejects cloud-hosted execution entirely, the answer tilts strongly toward Claude Code first. If your team is comfortable with cloud sandboxes and wants a review-after-the-fact workflow, Codex can be the better fit. If your governance posture is nuanced, you may end up with a split model: Codex for lower-sensitivity feature work and Claude Code for the areas where supervised local terminal work is easier to approve.

Implementation Traps and Onboarding Costs

Most bad tool choices do not fail on day one. They fail in week three, when the novelty disappears and the workflow mismatch becomes obvious. That is why onboarding cost matters almost as much as model quality. A team can adopt the “better” tool on paper and still lose if the daily loop clashes with how the developers scope work, review code, and recover from mistakes.

Codex is easiest to underestimate when a team treats it like a faster ticket-taker. Delegated cloud execution sounds efficient, but it magnifies ambiguity. If the task description is vague, the repo standards are inconsistent, or code review only starts after a large change arrives, the team will feel like Codex produces “surprising” work when the real problem is underspecified delegation. Codex tends to reward teams that already know how to write bounded tasks, define acceptance checks, and review results asynchronously. Without those habits, the agent can feel more expensive than it really is because the rework happens downstream.

Claude Code creates the opposite failure mode. Teams often assume it will feel naturally safer because it lives in the terminal and keeps the human closer to each command. That is true for shell-native developers, but it is not automatically true for everyone else. If the team does not think in terms of repository exploration, command-line iteration, and stepwise debugging, Claude Code can feel slower than the benchmark narrative suggests. The tool is powerful, but it asks the developer to stay engaged in the loop. For some teams that is a feature; for others it becomes an adoption tax.

The context-window debate also hides a practical onboarding issue. A larger context ceiling does not rescue a messy repo, vague coding standards, or poor task decomposition. Codex benefits when you slice work into bounded requests the agent can complete and return. Claude Code benefits when the local repo, test commands, and shell utilities make exploration efficient. In both cases, the surrounding engineering discipline still matters. Teams often blame the agent for problems caused by repository hygiene.

Here are the rollout traps worth watching for:

| Trap | What it looks like in practice | Better response |
|---|---|---|
| Delegation without task hygiene | Codex outputs large patches that reviewers reject late | Shorten task scope, require acceptance checks, and review earlier |
| Terminal tool without terminal fluency | Claude Code sessions stall because the user does not know how to steer the shell workflow | Pair initial users with engineers already comfortable in CLI-heavy debugging |
| Context myth | The team assumes “bigger context” will solve cross-file confusion | Improve repo docs, commands, and task framing before changing tools |
| Hybrid by status signaling | Two tools are adopted because leadership wants “best of both” without clear ownership | Make one tool the default and define a narrow escalation path to the second |

This is why I do not recommend using price or benchmark rank as the first filter. The first filter should be whether your developers are more likely to delegate work or collaborate live with the agent. The second should be whether your review system catches problems early enough for that working style. Only after those answers are clear does cost become a reliable tie-breaker.

There is also a subtle management difference between the two products. Codex tends to create value that is easy for managers to see: tasks can be assigned, background work completes, and completed changes appear ready for review. Claude Code tends to create value that is easier for senior developers to feel: fewer context switches, faster repo exploration, more precise iteration during difficult debugging or refactoring work. Neither is “better” in the abstract, but they make their value visible in different ways. That matters when you are trying to get team-wide buy-in instead of just pleasing power users.

Decision Worksheet: Which Tool Should You Adopt First?

This is the section most comparison pages still do not provide. Score each row for the tool that sounds more like your team. Add 2 points for a strong match and 1 point for a partial match.

| Decision factor | Codex signal | Claude Code signal |
|---|---|---|
| How work starts | “We want to hand off tasks and review completed work later.” | “We want the agent beside us while we explore and edit.” |
| Where trust lives | “Cloud sandboxing is acceptable if permissions are explicit.” | “We prefer the terminal and local repo to stay central to execution.” |
| Budget shape | “Bundled seat access and lower posted API rates matter most.” | “We can tolerate more variable usage if the workflow is a better fit.” |
| Repository shape | “Tasks are separable enough to delegate in parallel.” | “Changes often require step-by-step understanding across interdependent files.” |
| Team behavior | “We already review after execution and are comfortable with asynchronous workflows.” | “We prefer approving, steering, and iterating during execution.” |

Interpret the score like this:

- 8-10 Codex points: choose Codex first.
- 8-10 Claude Code points: choose Claude Code first.
- 6-7 split points: run a staged hybrid pilot only if you already have written review rules and one person to own each workflow.
- 5 or fewer on both sides: do not go hybrid yet. Pilot one tool for two weeks, then rescore.

Two override rules matter more than the score. First, if cloud execution is not acceptable for the target repository, choose Claude Code first regardless of convenience. Second, if your team will not work in the terminal and does not want to supervise tool use closely, choose Codex first even if Claude’s benchmark story looks attractive.
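
If you want to run the worksheet across several teams, the scoring and override rules can be sketched in a few lines. This is one possible reading, not a definitive implementation: the function and parameter names are hypothetical, I interpret "6-7 split points" as "neither tool reaches 8 and the higher total is 6 or 7", and I assume the cloud-execution override is checked first when both overrides apply.

```python
from typing import Iterable, Literal

Tool = Literal["codex", "claude_code"]


def recommend(
    rows: Iterable[tuple[Tool, int]],
    *,
    cloud_execution_allowed: bool = True,
    team_will_supervise_in_terminal: bool = True,
) -> str:
    """Apply the worksheet. Each row is (tool that matched, 1 or 2 points)."""
    # Override rules matter more than the score.
    # Assumption: the cloud-execution rule takes precedence if both apply.
    if not cloud_execution_allowed:
        return "claude_code_first"
    if not team_will_supervise_in_terminal:
        return "codex_first"

    totals = {"codex": 0, "claude_code": 0}
    for tool, points in rows:
        if points not in (1, 2):
            raise ValueError("use 2 for a strong match and 1 for a partial match")
        totals[tool] += points

    if totals["codex"] >= 8:
        return "codex_first"
    if totals["claude_code"] >= 8:
        return "claude_code_first"
    if max(totals.values()) >= 6:
        # Only valid if written review rules and workflow owners already exist.
        return "staged_hybrid_pilot"
    return "pilot_one_tool_then_rescore"


if __name__ == "__main__":
    answers = [("codex", 2), ("codex", 2), ("codex", 2), ("codex", 2), ("claude_code", 1)]
    print(recommend(answers))                                  # codex_first
    print(recommend(answers, cloud_execution_allowed=False))   # claude_code_first
```

Keeping the overrides ahead of the arithmetic mirrors the intent of the worksheet: a governance constraint should end the discussion before anyone argues about a one-point difference.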

That is the real decision artifact. It turns the comparison into a workflow choice instead of a branding choice.

Recommended Scenarios

Once you use the worksheet, the scenarios become clearer.

| Team situation | Better first choice | Why |
|---|---|---|
| Small product team shipping lots of bounded features | Codex | Delegation, bundled access, and asynchronous review create value quickly |
| Platform or infrastructure team living in shell-heavy workflows | Claude Code | Terminal-native work and visible command flow match existing habits |
| Enterprise team with mixed code sensitivity | Claude Code first, then selective Codex | Governance is easier when the stricter path is established before adding cloud delegation |
| Team already disciplined in code review and task ownership | Hybrid can work | You can assign Codex to bounded implementation and Claude Code to deeper local investigation without chaos |

The biggest mistake is choosing hybrid because it sounds sophisticated. Hybrid is only better when the team already knows which tool owns which job. If you do not have that clarity, hybrid usually means duplicated prompts, duplicated spend, and more arguments about which tool “should have done it.” If you want a broader market picture that includes IDE-centric workflows, the more complete read is our Codex vs Claude Code vs Cursor guide.

A Safe Hybrid Rollout, If You Really Need One

The right hybrid rollout is narrow, not ambitious. Start with one primary tool and one backup use case. A good example is Codex as the default for bounded implementation tasks and Claude Code as the fallback for terminal-heavy debugging, migration analysis, or high-touch repository surgery. That keeps the second tool from becoming an expensive identity statement.

The second rule is to define ownership. If a task starts in Codex, decide when it must be escalated to Claude Code. If a task starts in Claude Code, decide when it should be converted into a more delegated Codex job. Teams that skip this step usually blame the tools for what is really a workflow-design failure.

The third rule is to measure one thing that matters. For Codex, that might be “how many tasks finished without local babysitting.” For Claude Code, it might be “how many complex local changes landed without rework.” If you only track anecdotal delight, both tools will look amazing and you still will not know what to standardize.
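
The three rules above (one default, explicit ownership, one metric) can be written down as a small routing table plus a single measurement. Everything here is hypothetical: the task categories, field names, and function names are placeholders for whatever your tracker already records, and neither vendor ships such an API.

```python
# Hypothetical task categories that should start in (or escalate to) Claude Code.
ESCALATE_TO_CLAUDE_CODE = {
    "terminal_debugging",
    "migration_analysis",
    "repository_surgery",
}


def pick_tool(task_kind: str) -> str:
    """Codex is the default; Claude Code owns the narrow escalation list."""
    return "claude_code" if task_kind in ESCALATE_TO_CLAUDE_CODE else "codex"


def unattended_completion_rate(tasks: list[dict]) -> float:
    """Share of Codex tasks that finished without local babysitting."""
    codex_tasks = [t for t in tasks if t["tool"] == "codex"]
    if not codex_tasks:
        return 0.0
    clean = sum(1 for t in codex_tasks if t["finished"] and not t["needed_local_help"])
    return clean / len(codex_tasks)


if __name__ == "__main__":
    log = [
        {"tool": "codex", "finished": True, "needed_local_help": False},
        {"tool": "codex", "finished": True, "needed_local_help": True},
        {"tool": "claude_code", "finished": True, "needed_local_help": False},
        {"tool": "codex", "finished": False, "needed_local_help": False},
    ]
    print(pick_tool("bounded_feature"))       # codex
    print(pick_tool("migration_analysis"))    # claude_code
    print(round(unattended_completion_rate(log), 2))
```

The value of writing the escalation list down is less the code than the argument it forces: if nobody can agree on what belongs in that set, the team is not ready for hybrid.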

My practical recommendation is simple: choose one tool as the default, live with it long enough to learn its failure modes, and only then add the second. Teams that reverse that order usually spend more time comparing agents than shipping software.

FAQ

Is Codex cheaper than Claude Code?

Usually, but not in every workflow. Codex has the lower posted API price on both input and output for the current gpt-5.2-codex listing, and OpenAI also bundles Codex access into paid ChatGPT plans (OpenAI; OpenAI API docs, verified 2026-03-18). Claude Code, however, is not well described by a flat seat-price comparison because Anthropic’s own guidance emphasizes daily and monthly usage variance rather than a simple “one subscription equals one predictable cost” story (Anthropic, verified 2026-03-18). If your developers spend hours in long terminal sessions, the real Claude spend can rise faster than a casual pricing table suggests.

Is Claude Code actually local and private?

It is local in execution style, but that is not the same thing as “nothing leaves your machine.” Anthropic’s docs say Claude Code runs in the local environment, while prompts and outputs still traverse the network to reach the model. Anthropic also says data from commercial users is not used for model training by default and documents retention controls including zero-data-retention for appropriate API setups (Anthropic data usage docs, verified 2026-03-18). That makes Claude Code easier to govern for many teams, but it does not eliminate data-boundary questions.

Are benchmarks enough to choose between them?

No. Benchmarks are useful for filtering out weak tools, but both Codex and Claude Code are already past that filter. The better question is whether your team wants delegated cloud execution or supervised terminal-native work. OpenAI and Anthropic publish strong but not perfectly comparable benchmark evidence, so a buyer who treats the benchmark table as the whole decision is likely to overweight the wrong variable.

When should a team choose hybrid from the start?

Rarely. Choose hybrid from the start only if you already have written task boundaries, clear ownership, and at least one person who can teach each workflow. Without those controls, hybrid usually adds coordination overhead before it adds capability. For most teams, the better sequence is one default tool first, then a narrow second-tool expansion once the first workflow is stable.

What if my team already uses Cursor?

Then the question becomes less about “which tool is best” and more about “which gap is still unsolved.” If Cursor already covers day-to-day IDE assistance, Codex often adds value when you want more delegated background execution, while Claude Code often adds value when you want deeper terminal-native investigation. That is why the right follow-up read is our Cursor vs Codex vs Claude Code comparison, not another two-tool benchmark recap.

Should a team standardize on one vendor contract first?

Usually yes. Standardizing on one default contract first reduces two forms of waste: procurement waste and behavioral waste. Procurement waste happens when leadership buys two overlapping tools before anyone has shown which workflow actually fits the team. Behavioral waste happens when developers split into camps, prompts get duplicated across tools, and nobody can explain why one task should start in one product instead of the other.

Codex makes a one-contract-first rollout relatively easy because OpenAI bundles access into multiple paid ChatGPT plans and lets teams expand with credits if usage grows (OpenAI, verified 2026-03-18). Claude Code can also work well as a first standard, but only if the team genuinely wants the terminal-native workflow and is prepared for usage patterns that Anthropic itself describes as variable rather than flat and predictable (Anthropic Claude Code docs, verified 2026-03-18).

The practical rule is simple: standardize first on the workflow you want most of the team to learn, not on the tool that looks strongest in a single benchmark or demo. If most of your work should become delegated, review-driven, and asynchronous, standardize on Codex first. If most of your work should remain repo-local, interactive, and shell-centered, standardize on Claude Code first. Add the second vendor only when the missing use case is real and recurring.

Final Verdict

If I had to make a first-buy recommendation for most software teams today, I would choose Codex when the team wants asynchronous delegation, simpler paid access through ChatGPT, and a workflow that turns AI into background execution. I would choose Claude Code when the team wants a terminal-first agent, closer supervision, and better alignment with developers who already think in shell commands and repository exploration. I would choose hybrid only after those preferences are explicit, not before.

That is the core update this article needed. The choice is no longer mainly about who can post the prettiest benchmark chart. It is about which working contract your team can actually sustain.